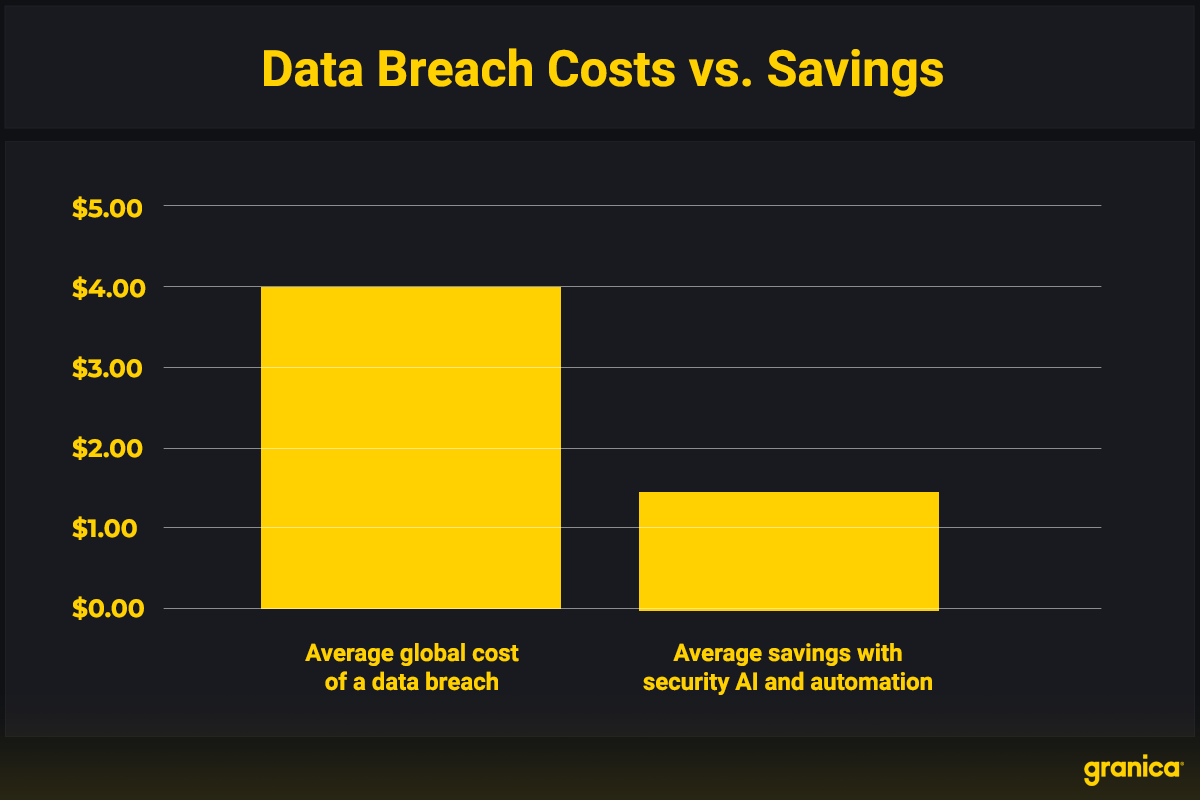

Security breaches have never been so costly. On average, a data breach costs $4.45 million, a 15% increase since 2020. Some breaches are far more expensive: a 2024 data breach cost UnitedHealth Group $1.6 billion to address. Implementing a robust data security strategy is the best way to reduce the risk of such a costly incident, in terms of both dollars and data privacy.

Source: IBM’s Cost of a Data Breach Report 2023.

This is especially true for organizations that use generative AI and large language models (LLMs). Lax privilege and privacy policies, coupled with poor data visibility, can create significant data security vulnerabilities, including breaches of personally identifiable information (PII) from LLMs trained on, or prompted with, data containing such PII. Thankfully, organizations can employ some specific strategies to help mitigate these security risks.

Maintaining an effective data security strategy

The most effective data security strategy depends on how an organization uses its data. No single, one-size-fits-all solution can prevent every possible data breach. However, most companies can treat the following four strategies as universal best practices for securing their data.

| Essential Data Security Strategies | |

|---|---|

| What | How |

| Limit access |

Using these two data security strategies in tandem shrinks possible attack surfaces and reduces the risk of data leaks. |

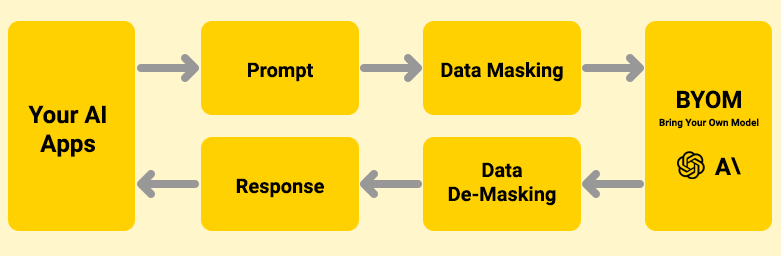

| Protect PII & other sensitive information | Masking LLM training data is an important step in any genAI data security strategy. However, protecting input data in prompts and generated output data in responses is equally important. Organizations can use data privacy tools to mask sensitive information submitted in prompts and protect data as it’s processed. The same tools can protect sensitive response data in a similar fashion. Here’s how it works: 1. Privacy tools automatically detect sensitive information, including data protected under GDPR, CCPA, HIPAA, and other regulations. 1. Organizations can further customize the tool to detect additional types of sensitive data or exclude data known to be non-sensitive. 1. Before users send prompts to an external LLM, the privacy tool first screens them for sensitive data and masks prompts using placeholder data. 1. The LLM processes the masked prompt and formats a response. 1. Before sending a response to the user, the privacy tool screens it and unmasks relevant placeholder data, allowing the user to receive an accurate response.  |

| Prevent data exfiltration | Data security platforms (DSP) and tools ensure organizations can maintain control of all their data. They mitigate different types of data loss, like missing data, information leaks, exfiltration, unauthorized access, and breaches. Some of the most important areas that data security and privacy tools need to protect include:

Organizations often need multiple tools to maintain the most effective data security strategy. |

| Monitor & manage vulnerabilities | Organizations must scan for unsecured ports and ensure every data access point remains compliant with security policies. Frequent software updates will also help fortify companies against breaches. Once identified, these vulnerabilities require a rapid response. Organizations should prioritize assets by vulnerability so they can protect the most sensitive data as quickly as possible. |
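
To make the prioritization step in the last row more concrete, here is a minimal Python sketch of a remediation queue. Everything in it is an assumption for illustration: the `Finding` type, the asset names, the 0 to 10 severity score, and the 1 to 5 sensitivity scale are hypothetical and do not come from any particular scanner or product.

```python
from dataclasses import dataclass


@dataclass
class Finding:
    """One vulnerability found on one asset (hypothetical structure)."""
    asset: str
    severity: float   # assumed 0-10 score, higher is worse
    sensitivity: int  # assumed 1 (public data) to 5 (regulated PII)


def prioritize(findings):
    """Order findings so assets holding the most sensitive data come first.

    Ties on sensitivity are broken by severity, highest first.
    """
    return sorted(
        findings,
        key=lambda f: (f.sensitivity, f.severity),
        reverse=True,
    )


# Illustrative findings only; not real scan results.
findings = [
    Finding("marketing-site", severity=9.1, sensitivity=1),
    Finding("patient-records-db", severity=7.5, sensitivity=5),
    Finding("internal-wiki", severity=4.0, sensitivity=2),
    Finding("billing-api", severity=8.2, sensitivity=4),
]

queue = prioritize(findings)
for position, finding in enumerate(queue, start=1):
    print(position, finding.asset, finding.sensitivity, finding.severity)
```

With these sample values the queue starts with `patient-records-db` and ends with `marketing-site`, even though the marketing site has the highest raw severity. Sorting on sensitivity first is one possible policy; a team could just as reasonably weight the two scores differently.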

With these four strategies firmly in place, organizations may also consider using specialized tools to address other important security issues.

Additional data security strategy tools

- Data encryption tools: These tools prevent unauthorized access by making data unreadable to anyone who lacks the correct key or password.

- Backup tools: Backups are essential to preventing data loss. Organizations can keep them secure with tools that limit access rights and store encrypted copies in multiple secure locations.

- Firewalls: These tools block unauthorized users and traffic from reaching sensitive information. Cloud-native firewalls are especially useful for protecting data in cloud environments.

- Compliance management tools: Complying with data protection regulations is crucial for sensitive healthcare and financial data. Compliance tools help organizations secure data according to the latest best practices.

Organizations that frequently use genAI or LLM datasets should also prioritize tools that address LLM security concerns and improve trust in AI. Granica Screen is one such tool that can significantly improve AI outcomes while protecting PII.

Build a strong data security strategy with Granica

Granica Screen is a data privacy service that protects sensitive data to make it safe for comprehensive use with AI, from training to inference. It works by classifying and de-identifying data, whether in cloud data lakes or in LLM input prompts and output results. Granica Screen can detect and mask any sensitive information in structured, semi-structured, and unstructured training files. It can also detect and mask sensitive data that users send to LLMs in real time, so the prompt’s intent can still be carried out, but with placeholders instead of sensitive information. These placeholders are unmasked when LLM responses are processed, so the end user or application still receives the complete response their prompt was meant to produce.
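
The mask-then-unmask round trip described above can be illustrated with a short Python sketch. This is not Granica Screen's implementation or API. The regular expressions, the placeholder format, and the `fake_llm` stand-in are all assumptions made for illustration, and a production tool would rely on much more robust detection than two regex patterns.

```python
import re

# Hypothetical detectors for two kinds of sensitive data.
PATTERNS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def mask(text):
    """Replace detected values with placeholders.

    Returns the masked text and a placeholder -> original value mapping.
    """
    mapping = {}
    for label, pattern in PATTERNS.items():

        def substitute(match, label=label):
            value = match.group(0)
            # Reuse the placeholder when the same value appears again.
            for placeholder, original in mapping.items():
                if original == value:
                    return placeholder
            placeholder = f"[{label}_{len(mapping) + 1}]"
            mapping[placeholder] = value
            return placeholder

        text = pattern.sub(substitute, text)
    return text, mapping


def unmask(text, mapping):
    """Restore the original values in a response."""
    for placeholder, original in mapping.items():
        text = text.replace(placeholder, original)
    return text


def fake_llm(prompt):
    """Stand-in for an external LLM call; it only ever sees placeholders."""
    return "Summary: " + prompt


prompt = "Email jane.doe@example.com about SSN 123-45-6789."
masked_prompt, mapping = mask(prompt)
masked_response = fake_llm(masked_prompt)
final_response = unmask(masked_response, mapping)

print(masked_prompt)
print(final_response)
```

The key property is that the mapping never leaves the caller's side: the external model receives only `masked_prompt`, and the original values are restored after the response comes back.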

Granica Screen’s state-of-the-art accuracy for named entity recognition (NER) sets it apart from other data privacy services. Some tools overmask data, which decreases the accuracy of responses. Conversely, low-recall models risk letting sensitive data slip through.
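
The trade-off between overmasking and low recall can be made concrete with the standard precision and recall formulas. The Python sketch below uses made-up example entities, not benchmark results for any tool: precision is the share of masked items that were truly sensitive, and recall is the share of truly sensitive items that were masked.

```python
def precision_recall(predicted, actual):
    """Compute precision and recall for a set of masked items.

    predicted: items the detector masked
    actual:    items that are truly sensitive
    """
    predicted, actual = set(predicted), set(actual)
    true_positives = len(predicted & actual)
    precision = true_positives / len(predicted) if predicted else 1.0
    recall = true_positives / len(actual) if actual else 1.0
    return precision, recall


# Illustrative values only.
actual = {"Jane Doe", "jane@example.com", "123-45-6789"}

# An overmasking detector also hides harmless words, hurting precision.
overmasking = actual | {"Tuesday", "invoice", "meeting"}

# A low-recall detector misses sensitive items, letting them leak.
low_recall = {"Jane Doe"}

print(precision_recall(overmasking, actual))
print(precision_recall(low_recall, actual))
```

In this toy case the overmasking detector reaches full recall but only 50% precision, while the low-recall detector has perfect precision but catches just one of the three sensitive values.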

Granica uses an adaptive NER classification system that learns which data to mask, tokenize, and redact with high precision and high recall. It also supports over 100 languages to ensure global protection. Organizations no longer have to choose between data privacy and best-in-class genAI; with Granica, they can have both.

Request a free demo with our data security experts today.