Granica Screen is a data privacy platform that protects natural language processing data and models to make them safe to use – from training and fine-tuning to inference. It discovers harmful and sensitive information in cloud data lake files and input prompts with state-of-the-art accuracy to ensure safe use with both in-house and external AI services.

Powering LLM-based applications with your own data is critical, but also high-stakes. Even a small leak of sensitive or harmful content is a major problem, so the highest possible detection accuracy is key, and that is exactly what Granica Screen delivers.

Discover and mask sensitive information in end-user and application-generated prompts, whether for use with in-house or third-party LLMs.

Discover and mask sensitive information in training data to ensure it doesn’t accidentally leak at inference time.

Replace sensitive information with synthetic data (realistic but fake) to improve accuracy and privacy when training, fine-tuning, and running inference with LLMs.
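To make the idea of synthetic replacement concrete, here is a minimal sketch in Python. It is not Granica Screen's implementation or API: the function name `synthesize_ssns`, the single regex detector, and the seeded random generator are all assumptions chosen for illustration. It swaps each US Social Security number for a fake value in the same format and reuses the same fake for repeated occurrences, so references within a text stay consistent.

```python
import random
import re

# Hypothetical detector: one regex for US SSNs. A production system would
# rely on much more accurate entity detection than a single pattern.
SSN_RE = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")


def synthesize_ssns(text: str, seed: int = 0) -> str:
    """Replace each SSN with a fake value that keeps the NNN-NN-NNNN format."""
    rng = random.Random(seed)  # seeded so the sketch is reproducible
    mapping = {}  # original value -> fake value, so repeats stay consistent

    def fake(match):
        original = match.group(0)
        if original not in mapping:
            # First group drawn from 900-999 purely so the fakes stand apart
            # from the sample input used with this sketch.
            mapping[original] = (
                f"{rng.randint(900, 999)}-"
                f"{rng.randint(10, 99)}-"
                f"{rng.randint(1000, 9999)}"
            )
        return mapping[original]

    return SSN_RE.sub(fake, text)
```

Because the replacement keeps the original format, downstream code that parses or validates the field keeps working, which is the practical advantage of synthetic data over a blanket placeholder.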

With Granica Screen, you can confidently mitigate data safety and privacy risks to build better AI, faster.

What’s truly meant by the term AI-ready data, and what are the best approaches to successfully deliver AI-ready data aligned to AI use-cases? Download Gartner's latest research report to learn how AI efforts are evolving the data management requirements for organizations, compliments of Granica.

Granica Screen delivers 5-10x higher compute efficiency, which lowers infrastructure cost per byte scanned vs. traditional approaches and enables cost-effective scanning of broad data sets.

The AWS and Google Cloud marketplaces let you quickly and easily utilize Granica Screen while benefiting from simplified billing, as charges are consolidated into your cloud invoice.

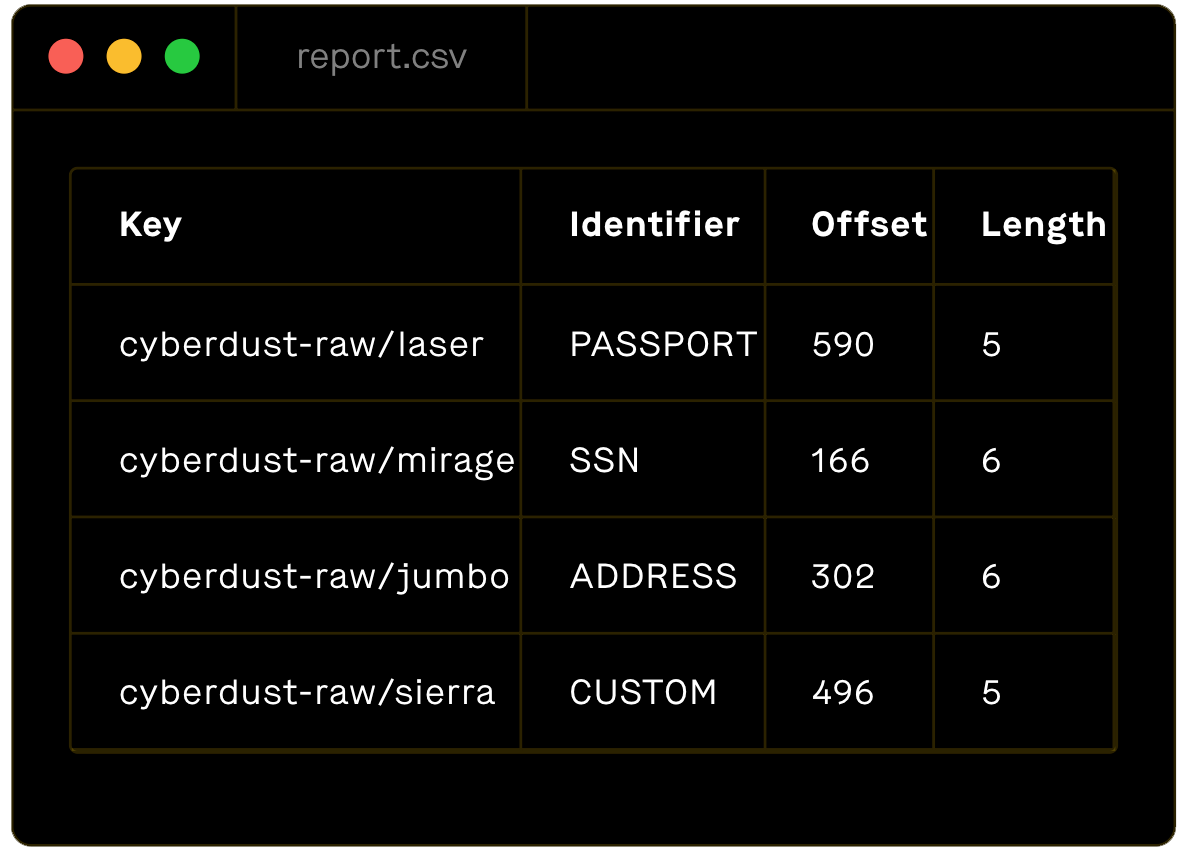

Data types and classifiers supported

Granica supports a wide range of AI/ML/analytics data types and classifiers (e.g., phone number, SSN, VIN). Bring us your unique requirements, and we can tailor them to your use case.

NLP/Text

Clickstream

Logs

Tabular


Read Our Latest White Paper "Achieving AI Security: Guidance and Opportunities for CIOs, CISOs, and CDAOs"

g ~/ granica deploy

Success!

g ~/

No. Granica Screen is typically granted read-only access to private files. It reads and transforms sensitive information such as PII in those files using various de-identification techniques and then stores safe-for-use copies in a separate target bucket.
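The read-then-copy flow described above can be sketched in a few lines of Python. This is an illustration under stated assumptions, not Granica Screen's code: local directories stand in for the source and target buckets, two simple regexes stand in for real entity detection, and the names `mask_text` and `write_safe_copies` are hypothetical.

```python
import re
from pathlib import Path

# Illustrative patterns only; real detection would use NER models,
# not two regular expressions.
PATTERNS = {
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "PHONE": re.compile(r"\b\d{3}[-.]\d{3}[-.]\d{4}\b"),
}


def mask_text(text: str) -> str:
    """Replace each detected entity with a placeholder such as [SSN]."""
    for label, pattern in PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    return text


def write_safe_copies(source_dir: Path, target_dir: Path) -> int:
    """Read .txt files from source_dir and write masked copies to target_dir.

    The source files are only read, never modified. Returns the number of
    copies written.
    """
    target_dir.mkdir(parents=True, exist_ok=True)
    count = 0
    for src in sorted(source_dir.glob("*.txt")):
        masked = mask_text(src.read_text(encoding="utf-8"))
        (target_dir / src.name).write_text(masked, encoding="utf-8")
        count += 1
    return count
```

Keeping the masked output in a separate location means the process only ever needs read access to the original files, and downstream users can be pointed at the safe copies alone.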

Granica Screen provides state-of-the-art named entity recognition (NER) accuracy for 50+ entities and global support for 100+ languages. High accuracy is the critical foundation of real data privacy, enabling truly safe and compliant use in ML and generative AI.

Screen is also highly compute-efficient, lowering the cost to side-scan data by 5-10x and thus increasing the volume of data you can unlock for training by 5-10x at comparable cost. Finally, Granica Screen continuously monitors your data lake to detect and protect sensitive information and PII in new files immediately after they land.

Yes, both products are built on the Granica platform and are fully compatible with one another. You can maximize your benefits by using them together. For example, a common pattern is to first use Granica Screen to generate safe-for-use file copies in a target bucket. Then, using Granica Crunch on that target bucket minimizes the cost of storing and accessing those copies.

Unlock even more data for AI/ML teams to safely improve model performance, whether for private LLMs and generative AI or traditional AI and machine learning. The Granica Screen data privacy platform reduces breach and compliance risks while simultaneously improving the effectiveness and efficiency of downstream AI workflows.